In container environments (Docker or Kubernetes) we need to deploy quickly, and the factor that matters most for this is container size. We must keep containers small, because the larger a container is, the slower it is to download from the registry and to start, and we must do so in a way that minimally affects the complexity of the relationships between services.

For a demonstration, we are going to use a PowerDNS-based solution. I find that the original PowerDNS-Admin service container (https://github.com/ngoduykhanh/PowerDNS-Admin/blob/master/docker/Production/Dockerfile) has the following features:

Docker Hub has images, but few are sufficiently recent, and some do not use the original repository, so the result comes from an old version of the code. For example, the most downloaded image (really/powerdns-admin) does not take into account the modifications of the last year and does not use yarn for the Node.js layer.

First step: Decide whether to create a new Docker image

Sometimes it is a matter of necessity or time; in this case we decided to create a new image, taking the above into account. As minimum requirements we need a GitHub account (https://www.github.com), a Docker Hub account (https://hub.docker.com/), basic knowledge of git and advanced knowledge of writing Dockerfiles. In this case, https://github.com/aescanero/docker-powerdns-admin-alpine and https://cloud.docker.com/repository/docker/aescanero/powerdns-admin are created and linked so that the images are built automatically when a Dockerfile is pushed to GitHub.

Second step: Choose the base of a container

Using a very small base, oriented to reducing each of the components (executables, libraries, etc.) that are going to be used, is the minimum requirement for reducing the size of a container, and the choice should always be Alpine (https://alpinelinux.org/).
In addition to the points already indicated, standard distributions (based on Debian or Red Hat) require a large number of items for package management, the base system, auxiliary libraries, etc. Alpine eliminates these dependencies and provides a simple package system that lets us identify which groups of packages were installed together so that they can be removed together (very useful for development, as we will see later). Using Alpine can reduce the size and deployment time of a container by up to 40%.

Another recent option to consider is Red Hat UBI; ubi-minimal is especially interesting (https://www.redhat.com/en/blog/introducing-red-hat-universal-base-image).

Third step: Install minimum packages

One of Alpine’s strengths is a simple and efficient package system that, in addition to reducing the size, reduces the build time of the container. The […]
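The idea of installing a group of packages together and removing them together can be illustrated with Alpine's `apk add --virtual` option, which registers the listed packages under one group name that `apk del` can later remove. The following is only a minimal sketch: the helper name `apk_build_step`, the group name `.build-deps` and the example packages and build command are assumptions chosen for illustration, not taken from the article's Dockerfile.

```python
import shlex


def apk_build_step(build_deps, commands, group=".build-deps"):
    """Return one shell command line that installs build_deps as a named
    virtual group, runs the given commands, then removes the whole group.

    Running everything in a single line keeps the temporary packages out
    of the resulting image layer. All names here are illustrative.
    """
    if not build_deps:
        raise ValueError("at least one build dependency is required")
    install = "apk add --no-cache --virtual {} {}".format(
        shlex.quote(group),
        " ".join(shlex.quote(pkg) for pkg in build_deps),
    )
    remove = "apk del {}".format(shlex.quote(group))
    # The caller's commands are inserted verbatim between install and remove.
    return " && ".join([install, *commands, remove])


if __name__ == "__main__":
    # Hypothetical example: compile Python dependencies, then drop the compiler.
    step = apk_build_step(["gcc", "musl-dev"], ["pip3 install -r requirements.txt"])
    print("RUN " + step)
```

The printed line could be pasted into a Dockerfile as a single `RUN` instruction, so that the compiler and headers never remain in the final image.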

Category: Series

Series of explanatory articles on a technological theme with a common objective.

K3s: Simplify Kubernetes

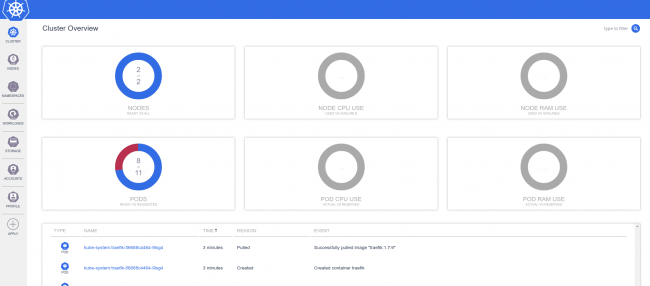

What is K3s?

K3s (https://k3s.io/) is a Kubernetes solution created by Rancher Labs (https://rancher.com/) that promises easy installation, few requirements and minimal memory usage. For a demo/development environment this is a great improvement on what we discussed previously in “Kubernetes: Create a minimal environment for demos”, where we saw that creating the Kubernetes environment is complex and requires too many resources, even when Ansible does the hard work. We will see whether these promises hold, and whether we can include MetalLB, which lets us emulate the load balancers of cloud providers, and K8dash, which lets us track the status of the infrastructure.

K3s Download

We configure the virtual machines in the same way as for Kubernetes, with the installation of dependencies:

We download the latest version of k3s from https://github.com/rancher/k3s/releases/latest/download/k3s and put it in /usr/bin with execution permissions. We must do this on all the nodes.

What is K3s?

K3s includes three “extra” services that change the initial approach we used for Kubernetes. The first is Flannel, which is integrated into K3s and handles the entire internal network management layer of Kubernetes. Although it is not as feature-complete as Weave (it lacks multicast support, for example), it is compatible with MetalLB. A very complete comparison of Kubernetes network providers can be seen at https://rancher.com/blog/2019/2019-03-21-comparing-kubernetes-cni-providers-flannel-calico-canal-and-weave/ .

The second service is Traefik, which handles ingress from outside the Kubernetes cluster. It is a powerful reverse proxy/balancer with multiple features that works at network layer 7, running behind MetalLB, which acts as the balancer at network layer 3.
The last “extra” service of K3s is servicelb, which gives us an application load balancer. The problem with this service is that it works at layer 3, the same layer where MetalLB works, so we cannot install it.

K3s Master Install

On the first node to be installed (which will be the master), we execute /usr/bin/k3s server --no-deploy servicelb --bind-address IP_MACHINE. If we want this to run every time the machine starts, we need to create a service file, /etc/systemd/system/k3smaster.service:

And then we execute:

to launch K3s and install the master node. At the end of the installation (about 20 seconds), we will save the contents of […]
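The contents of the k3smaster.service file are not reproduced in this excerpt. As a rough sketch only, a systemd unit wrapping the server command could be generated as shown here. The function name `render_k3s_master_unit`, the unit description and the `After=`, `Wants=`, `Type=` and `Restart=` settings are assumptions made for illustration, and the flags are assumed to be the double-dash forms `--no-deploy` and `--bind-address`; this is not the author's original file.

```python
import ipaddress


def render_k3s_master_unit(bind_address, k3s_path="/usr/bin/k3s"):
    """Return the text of an illustrative systemd unit for the K3s master.

    bind_address is validated as an IP address before being placed in
    ExecStart. Everything except the server command line is an assumption.
    """
    # Raises ValueError if bind_address is not a valid IPv4/IPv6 address.
    ipaddress.ip_address(bind_address)
    exec_start = "{} server --no-deploy servicelb --bind-address {}".format(
        k3s_path, bind_address
    )
    lines = [
        "[Unit]",
        "Description=K3s master (illustrative unit)",
        "After=network-online.target",
        "Wants=network-online.target",
        "",
        "[Service]",
        "Type=simple",
        "ExecStart=" + exec_start,
        "Restart=on-failure",
        "",
        "[Install]",
        "WantedBy=multi-user.target",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical address; replace with the real IP_MACHINE value.
    print(render_k3s_master_unit("192.168.8.10"), end="")
```

The output would be saved as /etc/systemd/system/k3smaster.service; systemd normally needs `systemctl daemon-reload` before a new unit can be enabled and started.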

Kubernetes: Create a minimal environment for demos

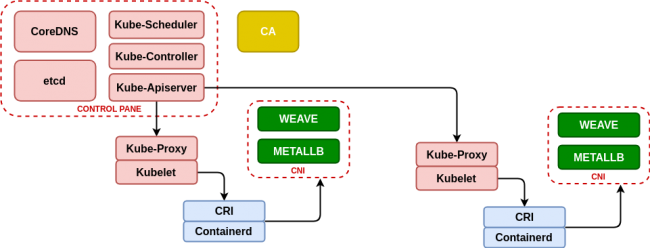

Every day more business environments are migrating to the cloud or to Kubernetes/OpenShift, and demonstrations need to meet those requirements. Kubernetes is not a friendly environment to carry on a laptop of medium capacity (8 GB to 16 GB of RAM), and even less so with a demo that itself requires resources.

Deploy Kubernetes on kubeadm, containerd, metallb and weave

This case is based on the kubeadm-based deployment of Kubernetes (https://kubernetes.io/docs/setup/production-environment/tools/kubeadm/create-cluster-kubeadm/), using containerd (https://containerd.io/) as the container life cycle manager. For minimal network management we will use MetalLB (https://metallb.universe.tf/), which lets us emulate the load balancers of cloud providers (such as AWS Elastic Load Balancer), and Weave (https://www.weave.works/blog/weave-net-kubernetes-integration/), which lets us manage container networks and integrates seamlessly with MetalLB.

Finally, taking advantage of the infrastructure, we deploy the real-time resource manager K8dash (https://github.com/herbrandson/k8dash), which lets us track the status of the infrastructure and of the applications that we deploy on it.

Although the Ansible roles that we have used before (see https://github.com/aescanero/disasterproject) let us deploy the environment easily and cleanly, we will examine the deployment step by step to understand how the changes we will make in subsequent chapters (using k3s) have an important impact on the availability and performance of the deployed demo/development environment.
First step: Install Containerd

The first step in the installation is the set of dependencies that Kubernetes has. A very good reference about them is the documentation that Kelsey Hightower makes available to those who need to know Kubernetes thoroughly (https://github.com/kelseyhightower/kubernetes-the-hard-way), especially those who are interested in Kubernetes certifications such as CKA (https://www.cncf.io/certification/cka/).

We start with a series of network packages:

We install the container life cycle manager (a containerd version that includes CRI and CNI) and take advantage of the packages that come with the Kubernetes network interfaces (CNI, or Container Network Interface):

The package includes the systemd service, so it is enough to start the service:

Second Step: kubeadm and kubelet

Now we download the Kubernetes executables. The first machine to be configured will be the “master”, and for it we have to download the binaries for kubeadm (the Kubernetes installer) and kubelet (the agent that will connect with containerd on each machine). To find out which is the stable version of Kubernetes we need to execute:

And we download the binaries (all of them on the master; on the nodes only kubelet is necessary) […]
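The commands for finding the stable version and downloading the binaries are not shown in this excerpt. As a hedged sketch, the Kubernetes project publishes the current stable version string at https://dl.k8s.io/release/stable.txt and serves release binaries under https://dl.k8s.io/release/VERSION/bin/linux/ARCH/. The helper names here, the default `amd64` architecture and the inclusion of kubectl on the master are assumptions for illustration.

```python
import urllib.request

STABLE_URL = "https://dl.k8s.io/release/stable.txt"
BASE_URL = "https://dl.k8s.io/release"


def fetch_stable_version(timeout=10):
    """Ask the Kubernetes release server for the current stable version."""
    with urllib.request.urlopen(STABLE_URL, timeout=timeout) as response:
        return response.read().decode("utf-8").strip()


def binary_urls(version, role, arch="amd64"):
    """Map each binary needed for a role to its download URL.

    Following the article: the master gets all binaries, the other nodes
    only kubelet. Adding kubectl to the master list is an assumption.
    """
    if not version.startswith("v"):
        raise ValueError("version should look like 'v1.16.2'")
    if role == "master":
        names = ["kubeadm", "kubelet", "kubectl"]
    elif role == "node":
        names = ["kubelet"]
    else:
        raise ValueError("role must be 'master' or 'node'")
    return {
        name: "{}/{}/bin/linux/{}/{}".format(BASE_URL, version, arch, name)
        for name in names
    }


if __name__ == "__main__":
    # Requires network access; the printed version depends on the current release.
    version = fetch_stable_version()
    for name, url in binary_urls(version, "master").items():
        print(name, url)
```

Each downloaded file would still need to be placed in a directory on the PATH and given execution permissions.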

Choose between Docker or Podman for test and development environments

When must we choose between Docker and Podman?

We often find that there are very few resources available and we need an environment to perform a complete product demonstration at a customer site. In those cases we need to simulate an environment in the simplest way possible and with minimal resources. For this we will adopt containers, but which is the best solution for those small environments?

Docker

Docker is the standard container environment. It is the most widespread, and it puts together a set of powerful tools: a command-line client, an API server, a container lifecycle manager (containerd) and a container launcher (runc).

Installing Docker is easy, since Docker supplies a script that prepares and configures the necessary requirements and repositories, and finally installs and configures Docker, leaving the service ready to use.

Podman

Podman is a container environment that does not use a service and therefore does not have an API server. Requests are made only from the command line, which has advantages and disadvantages that we will explain in this article.

Installing Podman is easy in a CentOS environment (yum install -y podman for CentOS 7 and yum install -y container-tools for CentOS 8), but it needs some work in a Debian environment:

Deploy with Ansible

In our case we have used the Ansible roles developed at https://github.com/aescanero/disasterproject to deploy two virtual machines, one with Podman and the other with Docker.
If we are using a Debian-based distribution, we must install Ansible:

We proceed to download the environment and configure Ansible:

Edit the inventory.yml file, which must have the following format:

There are some global variables that hang from “vars:”, which are:

The format of each machine is defined by the following attributes:

We deploy the environment with:

How to access

As a result, we obtain two virtual machines that we can access via ssh with ssh -i files/insecure_private_key vagrant@VIRTUAL_MACHINE_IP. In each of them we launch a container, with docker run -td nginx and podman run -dt nginx respectively. Once the nginx containers are deployed, we analyze which services are running in each case.

Results of the comparison

Podman starts only one process to launch nginx:

As for the size of the virtual machines, there is only 100 MB of difference between the two:

In conclusion we have the following points: Docker Podman Life cycle management, for example […]

Linux virtual machine with KVM from the command line

If we want to bring up virtual machines in a Linux environment that does not have a graphical environment, we can do so from the command line with an XML template. This article explains how the deployment performed with Ansible-libvirt in “KVM, Ansible and how to deploy a test environment” works internally.

Install Qemu-KVM and Libvirt

First, we must install libvirt and Qemu-KVM. On Ubuntu/Debian they are installed with:

And on CentOS/Red Hat with:

To launch the service we must run: $ sudo systemctl enable libvirtd && sudo systemctl start libvirtd

Configure a network template

Libvirt provides a powerful tool for managing virtual machines called ‘virsh’, which we must use to manage KVM virtual machines from the command line. For a virtual machine we mainly need three elements. The first is a network configuration that provides IP addresses to the virtual machines via DHCP. To do this, libvirt needs an XML template like the following one (which we will call “net.xml”):

Its main elements are:

- NETWORK_NAME: Descriptive name that we are going to use to designate the network, for example “test_net” or “production_net”.
- BRIDGE_NAME: Each network creates an interface on the host server that serves as the gateway for packets going between that network and the outside. Here we assign a descriptive name that lets us identify the interface.
- IP_HOST: The IP that this interface will have on the host server, which will be the gateway of the virtual machines.
- NETWORK_MASK: Depends on the network; for testing, a class C mask (255.255.255.0) is usually used.
- BEGIN_DHCP_RANGE: To assign IPs to virtual machines, libvirt uses an internal DHCP server (based on dnsmasq). Here we define the first IP of the range that can be served to virtual machines.
- END_DHCP_RANGE: Here we define the last IP that virtual machines can obtain.
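The net.xml template itself is not reproduced in this excerpt. As a sketch under stated assumptions, the six parameters above map naturally onto libvirt's network XML format (`<network>`, `<name>`, `<bridge>`, `<ip>`, `<dhcp>`, `<range>`). The function name `render_network_xml`, the sample values and the `<forward mode="nat"/>` element are assumptions for illustration and may differ from the author's template.

```python
import ipaddress
import xml.etree.ElementTree as ET


def render_network_xml(network_name, bridge_name, ip_host, network_mask,
                       begin_dhcp_range, end_dhcp_range):
    """Build a libvirt network definition from the six template parameters."""
    # Check that the DHCP range lies inside the host interface's network.
    network = ipaddress.ip_network(
        "{}/{}".format(ip_host, network_mask), strict=False
    )
    first = ipaddress.ip_address(begin_dhcp_range)
    last = ipaddress.ip_address(end_dhcp_range)
    if first not in network or last not in network:
        raise ValueError("DHCP range must be inside {}".format(network))
    if first > last:
        raise ValueError("DHCP range start must not be after its end")

    root = ET.Element("network")
    ET.SubElement(root, "name").text = network_name
    # Assumption: NAT forwarding so guests can reach the outside.
    ET.SubElement(root, "forward", mode="nat")
    ET.SubElement(root, "bridge", name=bridge_name)
    ip = ET.SubElement(root, "ip", address=ip_host, netmask=network_mask)
    dhcp = ET.SubElement(ip, "dhcp")
    ET.SubElement(dhcp, "range", start=begin_dhcp_range, end=end_dhcp_range)
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    # Hypothetical values for a small test network.
    print(render_network_xml("test_net", "virbr_test", "192.168.8.1",
                             "255.255.255.0", "192.168.8.2", "192.168.8.254"))
```

Saved as net.xml, a definition like this can be loaded with `virsh net-define net.xml` and started with `virsh net-start NETWORK_NAME`.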
Preparing the operating system image

The second element is the virtual machine image. This image can be created or downloaded; downloading is recommended to reduce the deployment time. One source of images for virtual machines with KVM/libvirt is Vagrant (https://app.vagrantup.com/boxes/search?provider=libvirt). To obtain the image of the virtual machine that interests us, we must download it from https://app.vagrantup.com/APP_NAME/boxes/APP_TAG/versions/APP_VERSION/providers/libvirt.box where APP_NAME is the name of the application we want to download (e.g. debian), APP_TAG is the distribution of this application (e.g. stretch64) and finally APP_VERSION is the version of the application (e.g. […]
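The URL pattern above can be filled in programmatically. This is a minimal sketch; the function name `box_url` is hypothetical, and the version string used in the example is only a placeholder, since the article's own example version is not shown in this excerpt.

```python
from urllib.parse import quote

BOX_URL_TEMPLATE = (
    "https://app.vagrantup.com/{name}/boxes/{tag}"
    "/versions/{version}/providers/libvirt.box"
)


def box_url(app_name, app_tag, app_version):
    """Fill APP_NAME, APP_TAG and APP_VERSION into the libvirt box URL."""
    parts = {"name": app_name, "tag": app_tag, "version": app_version}
    for key, value in parts.items():
        if not value:
            raise ValueError("{} must not be empty".format(key))
    # Percent-encode each path segment so unexpected characters cannot
    # change the structure of the URL.
    return BOX_URL_TEMPLATE.format(
        **{key: quote(value, safe="") for key, value in parts.items()}
    )


if __name__ == "__main__":
    # "debian" and "stretch64" come from the text; "9.9.0" is a placeholder.
    print(box_url("debian", "stretch64", "9.9.0"))
```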

KVM, Ansible and how to deploy a test environment

We will always follow the “KISS” philosophy (keep it simple, stupid!). For the creation of local development environments with virtual machines, we will focus on the performance that KVM gives us and on Ansible’s capabilities.