One of the most interesting details I found when using K3s (https://k3s.io/) is how it deploys Traefik: through a Helm chart. (Note: on standard Kubernetes the command is sudo kubectl, but on K3s it is sudo k3s kubectl, because kubectl is integrated into k3s to keep resource usage minimal.)

$ sudo k3s kubectl get pods -A

NAMESPACE NAME READY STATUS RESTARTS AGE

...

kube-system helm-install-traefik-ksqsj 0/1 Completed 0 10m

kube-system traefik-9cfbf55b6-5cds5 1/1 Running 0 9m28s

$ sudo k3s kubectl get jobs -A

NAMESPACE NAME COMPLETIONS DURATION AGE

kube-system helm-install-traefik 1/1 50s 12m

Helm is not installed on the system, yet we can see a job running the Helm client. This gives us the power of Helm without keeping Tiller (the Helm server) running, which saves the resources it would otherwise use. But how does it work?

Klipper Helm

The first thing to notice is the use of a job (a task that normally runs only once, as a container) based on the image “rancher/klipper-helm” (https://github.com/rancher/klipper-helm). It provides a Helm environment simply by pulling the image and running a single script: https://raw.githubusercontent.com/rancher/klipper-helm/master/entry
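
To check this on a running cluster, the image and arguments of the job's container can be read from the job spec. The following is only a sketch: the helper name show_job_container and the KUBECTL variable are hypothetical names introduced here, and it assumes the job has a single container (index 0).

```shell
# Hypothetical helper (not part of k3s): print the image and args of a job's first container.
# KUBECTL defaults to the k3s wrapper used in this article; override it on other clusters.
KUBECTL="${KUBECTL:-sudo k3s kubectl}"

show_job_container() {
  # $1 = namespace, $2 = job name
  $KUBECTL get job "$2" -n "$1" \
    -o jsonpath='{.spec.template.spec.containers[0].image}{"\n"}{.spec.template.spec.containers[0].args}{"\n"}'
}
```

For the Traefik job listed above, it would be called as `show_job_container kube-system helm-install-traefik`.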

The job requires a service account in the kube-system namespace with administrator permissions. For Traefik it is:

$ sudo k3s kubectl get clusterrolebinding helm-kube-system-traefik -o yaml

    ...
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
      name: cluster-admin
    subjects:
    - kind: ServiceAccount
      name: helm-traefik
      namespace: kube-system

Keep in mind that the service account must be created before the installation and can be removed once the Helm job has finished, since it will not be needed again until another Helm operation (an update or a removal).

As an example, we will create a job that installs Weave Scope from its Helm chart (https://github.com/helm/charts/tree/master/stable/weave-scope).

Service Creation

We create a workspace to isolate the new service (a namespace in Kubernetes, a project in OpenShift), which we will call helm-weave-scope:

$ sudo k3s kubectl create namespace helm-weave-scope

namespace/helm-weave-scope created

We create a new service account and grant it administrator permissions:

$ sudo k3s kubectl create serviceaccount helm-installer-weave-scope -n helm-weave-scope

serviceaccount/helm-installer-weave-scope created

$ sudo k3s kubectl create clusterrolebinding helm-installer-weave-scope --clusterrole=cluster-admin --serviceaccount=helm-weave-scope:helm-installer-weave-scope

clusterrolebinding.rbac.authorization.k8s.io/helm-installer-weave-scope created

The next step is to define the job, which we write to the file task.yml:

    ---
    apiVersion: batch/v1
    kind: Job
    metadata:
      name: helm-install-weave-scope
      namespace: helm-weave-scope
    spec:
      backoffLimit: 1000
      completions: 1
      parallelism: 1
      template:
        metadata:
          labels:
            jobname: helm-install-weave-scope
        spec:
          containers:
          - args:
            - install
            - --namespace
            - helm-weave-scope
            - --name
            - helm-weave-scope
            - --set-string
            - service.type=LoadBalancer
            - stable/weave-scope
            env:
            - name: NAME
              value: helm-weave-scope
            image: rancher/klipper-helm:v0.1.5
            name: helm-weave-scope
          serviceAccount: helm-installer-weave-scope
          serviceAccountName: helm-installer-weave-scope
          restartPolicy: OnFailure
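
The service account and cluster role binding that we created with imperative commands can also be written declaratively, which makes them easier to keep next to task.yml. The following is a minimal sketch, not the method K3s itself uses: installer_rbac_manifest is a hypothetical helper that prints both manifests for a given namespace and account name.

```shell
# Hypothetical helper: print a ServiceAccount plus a cluster-admin ClusterRoleBinding.
# $1 = namespace, $2 = service account name (also used as the binding name).
installer_rbac_manifest() {
  ns="$1"
  sa="$2"
  cat <<EOF
apiVersion: v1
kind: ServiceAccount
metadata:
  name: $sa
  namespace: $ns
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: $sa
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: $sa
  namespace: $ns
EOF
}
```

The output can be piped to the cluster, for example `installer_rbac_manifest helm-weave-scope helm-installer-weave-scope | sudo k3s kubectl apply -f -`, which should be equivalent to the two create commands above.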

Execution

We apply it with:

$ sudo k3s kubectl apply -f task.yml

job.batch/helm-install-weave-scope created

This launches all the resources that the chart defines:

# k3s kubectl get pods -A -w

NAMESPACE NAME READY STATUS RESTARTS AGE

helm-weave-scope helm-install-weave-scope-vhwk2 1/1 Running 0 9s

helm-weave-scope weave-scope-agent-helm-weave-scope-lrfs2 0/1 Pending 0 0s

helm-weave-scope weave-scope-agent-helm-weave-scope-drl8v 0/1 Pending 0 0s

helm-weave-scope weave-scope-agent-helm-weave-scope-lrfs2 0/1 Pending 0 0s

helm-weave-scope weave-scope-agent-helm-weave-scope-drl8v 0/1 Pending 0 0s

helm-weave-scope weave-scope-frontend-helm-weave-scope-844c4b9f6f-d22mn 0/1 Pending 0 0s

helm-weave-scope weave-scope-frontend-helm-weave-scope-844c4b9f6f-d22mn 0/1 Pending 0 0s

helm-weave-scope weave-scope-agent-helm-weave-scope-lrfs2 0/1 ContainerCreating 0 1s

helm-weave-scope weave-scope-agent-helm-weave-scope-drl8v 0/1 ContainerCreating 0 1s

helm-weave-scope weave-scope-frontend-helm-weave-scope-844c4b9f6f-d22mn 0/1 ContainerCreating 0 1s

helm-weave-scope helm-install-weave-scope-vhwk2 0/1 Completed 0 10s

helm-weave-scope weave-scope-agent-helm-weave-scope-lrfs2 1/1 Running 0 13s

helm-weave-scope weave-scope-agent-helm-weave-scope-drl8v 1/1 Running 0 20s

helm-weave-scope weave-scope-frontend-helm-weave-scope-844c4b9f6f-d22mn 1/1 Running 0 20s

Result

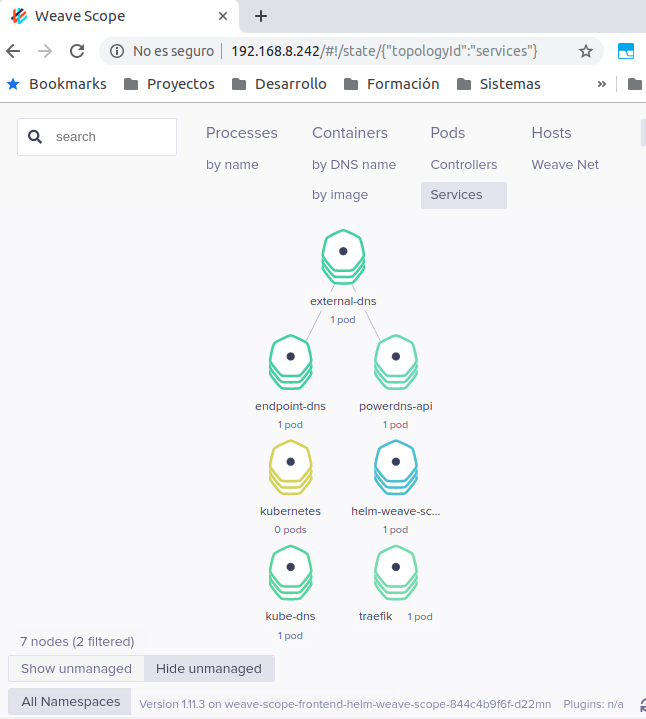

We can see that the application was installed correctly, without installing Helm on the system or keeping Tiller running:

# k3s kubectl get services -A -w

NAMESPACE NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

helm-weave-scope helm-weave-scope-weave-scope LoadBalancer 10.43.182.173 192.168.8.242 80:32567/TCP 7m5s
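
As noted earlier, the installer service account is only needed while the Helm job runs, so it can be removed once the job has completed. The following is a minimal sketch of that cleanup under the names used in this example. The helper cleanup_installer, the KUBECTL variable and the five-minute timeout are assumptions introduced here, not something K3s provides.

```shell
# Hypothetical helper: remove the temporary installer credentials after the Helm job is done.
# KUBECTL defaults to the k3s wrapper used in this article; override it on other clusters.
KUBECTL="${KUBECTL:-sudo k3s kubectl}"

cleanup_installer() {
  # $1 = namespace, $2 = job name, $3 = service account (and cluster role binding) name
  # Block until the job reports completion; stop if it does not finish within five minutes.
  $KUBECTL wait --for=condition=complete "job/$2" -n "$1" --timeout=300s || return 1
  # The binding is cluster-scoped, so it takes no namespace.
  $KUBECTL delete clusterrolebinding "$3"
  $KUBECTL delete serviceaccount "$3" -n "$1"
}
```

Here it would be called as `cleanup_installer helm-weave-scope helm-install-weave-scope helm-installer-weave-scope`. The account and binding have to be created again before any later Helm job that updates or removes the release.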

Update

With the arrival of Helm v3, Tiller is no longer necessary and Helm is much simpler to use. For an explanation of how it works, see “Helm v3 to deploy PowerDNS over Kubernetes”.
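
For comparison, the same installation with a Helm v3 client might look like the sketch below. It assumes that Helm v3 is installed, that a chart repository named stable is already configured, and that the helm-weave-scope namespace exists. The function name and the HELM variable are hypothetical. The visible difference from the job arguments above is that Helm v3 takes the release name as a positional argument instead of --name.

```shell
# Hypothetical wrapper: install the weave-scope chart directly with a Helm v3 client.
# HELM defaults to the helm binary on PATH; override it to point at another client.
HELM="${HELM:-helm}"

helm3_install_weave_scope() {
  # Helm v3: the release name is the first positional argument (v2 used --name).
  $HELM install helm-weave-scope stable/weave-scope \
    --namespace helm-weave-scope \
    --set-string service.type=LoadBalancer
}
```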